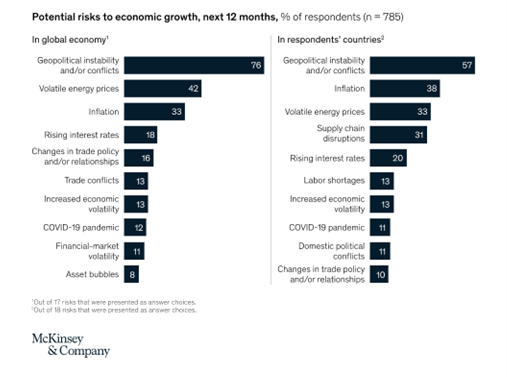

A recent McKinsey survey uncovered the risks that a consensus of executives is worried about for 2022 and beyond. Inflation, energy prices, and geopolitical conflict were among the top 10 concerns.

As an executive myself, I find this list accurate. Some of these issues – labor shortages, supply chain disruption, and inflation – have a more direct impact than others on the list. For Whitehat and the companies that rely on our technology guidance, what can we do about any of it? What tools are at our disposal to help mitigate the risk some of these issues pose?

With challenging times like these, I am looking to preserve cash, maintain access to capital to fuel growth through any economic difficulty, reduce operational expenses where possible, retain the talent we have, and make sure we can continue to attract team members to meet customer demand.

These could be goals for any year, but it is clear that this year is shaping up to be unlike any other in recent memory. Even with solid growth, I am a bit more conservative in practice, building reserves to navigate uncertainty and maybe even capitalize on an opportunity.

To reach some of these objectives, I found myself looking in at least one place where I don't usually look for assistance in helping mitigate risk—our technology stack.

Desktop and application virtualization in all its forms is a technology we and many of our customers have used. This technology recently caught my eye as it offers up some interesting tools to mitigate some of the risks the McKinsey survey identified.

Here are seven that appeal to both the CEO and CIO in me to create exciting possibilities for direct savings, improved efficiency, and attracting and retaining employees.

#1 – Reduce IT support costs by reducing complexity and limiting the need to send IT resources onsite. Automation is the least expensive way to resolve an IT issue. Self-service, where the end-user can remedy their own problem, is next. Costs then rise in both time and payroll dollars (with hourly support staff) as we move to Remote Support, where help desk personnel engage for the first time to solve the problem.

If we can't solve it remotely, Support has to go desk-side to solve the issue or get in a truck and drive to the person having the problem. Each step takes more time and raises the cost of supporting end-users.

With application or desktop virtualization, the desktops and applications never leave the data center. So support for remote offices, retail locations, field staff, or the “Work from Home” crowd involves far fewer elements that can go wrong or need attention. We can centralize patching and updates to 1-3 images in the data center, eliminating the need to touch every end device every month.

The more we can automate, the faster we can resolve support issues, and the more time can be focused on projects and making the company better, which is a sign that we are maximizing our IT support resources. We can spend more time and dollars on improving the organization and helping it move forward, rather than being forced to spend looking backward, repairing what is already in place.

While not directly related to virtual applications or virtual desktops, driving efficiency, automation, and optimization at the support desk has created incredible cost savings for the environments we manage. Correcting support desk inefficiencies has become one of our first points of focus when helping straighten out any support organization.

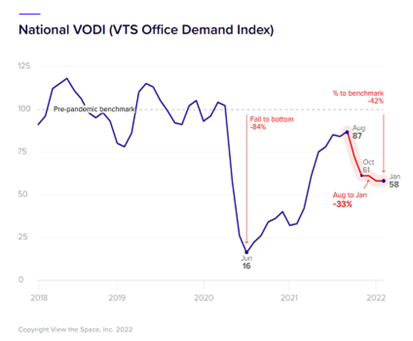

#2 – Reduce office footprint by allowing some percentage of staff to work from home. The chart from the VTS Office Demand Index (VODI) Report-February 2022* suggests that many companies are executing a strategy of reducing their office space footprint to about 58 percent of pre-pandemic levels.

*The VTS Office Demand Index (VODI) measures new demand for office space, i.e., the amount of office space reflected by new requirements from prospective tenants in a given month.

While Whitehat is almost entirely remote, desktop and application virtualization make it more practical to keep employees engaged and productive outside of the office, anywhere there is an Internet connection. The caveat to this is that the environment needs to be built well and maintained so end-users can get a PC-like experience.

Hoteling models are becoming popular again: employees come into the office only a few days a week, in rotation with other employees, and use hot desks rather than assigned ones. Employees use common desks while they are in the office and tidy them up before they leave, making room for the next employee.

Anyone who has supported users working from home knows that a home environment adds a significant number of variables that can affect an end-user's ability to work. However, there are tools and techniques to mitigate the support overhead that can come with work-from-home staff.

#3 – Reduce power with fewer people in the office. If there are fewer people in the office, and less office space, then it makes sense that the power draw would drop with fewer end-users, computers, servers, and infrastructure onsite.

#4 – Lower power cost, up to one-third for VDI IT infrastructure. From our testing and work done by some of our customers, application and desktop virtualization can drop power consumption to one-third of what an infrastructure primarily made up of PCs draws.
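To put the one-third figure in dollar terms, here is a rough back-of-the-envelope sketch. Only the one-third ratio comes from the claim above. The device count, wattage, working hours, and electricity price are hypothetical placeholders, not measurements from our testing, so substitute your own numbers.

```python
# Rough estimate of annual endpoint energy cost: PC fleet vs. virtual desktops.
# All inputs below are HYPOTHETICAL placeholders except VDI_POWER_RATIO,
# which reflects the "one-third of the power" claim in the text.

VDI_POWER_RATIO = 1 / 3  # VDI draw as a fraction of the PC-based draw


def annual_energy_cost(devices, watts_per_device, hours_per_day,
                       days_per_year, price_per_kwh):
    """Return the yearly electricity cost for a fleet of devices."""
    kwh = devices * watts_per_device * hours_per_day * days_per_year / 1000
    return kwh * price_per_kwh


# Hypothetical fleet: 500 PCs at 150 W, 8 hours a day, 250 days a year,
# at an assumed electricity price of $0.12 per kWh.
pc_cost = annual_energy_cost(500, 150, 8, 250, 0.12)
vdi_cost = pc_cost * VDI_POWER_RATIO
savings = pc_cost - vdi_cost

print(f"PC fleet:  ${pc_cost:,.0f} per year")
print(f"VDI:       ${vdi_cost:,.0f} per year")
print(f"Reduction: ${savings:,.0f} per year")
```

The sketch leaves out cooling and any change in data center load, so treat the result as an order-of-magnitude estimate rather than a forecast.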

#5 – Improve the hiring pool by being able to hire the best person anywhere. Virtual applications and desktops enable employees to work from anywhere there is a good internet connection, which means the hiring pool for new employees can potentially expand beyond those who live within a 45-minute commute of an office.

#6 – Improve benefits and put real cash in employees' pockets. Enabling employees to work from home full or part-time is a benefit that saves the company money and puts cash directly back into employees' pockets through reduced commuting costs, clothing/dry cleaning costs, and food costs from eating out at lunch.

#7 – Moving to a consumption model for virtual applications and desktops. Virtual application and desktop infrastructure can be consumed in an as-you-go model. Between AWS, Azure, co-location providers like Whitehat, or Whitehat's Titanium VDI-on-a-Box appliance model, capital can be preserved and interest avoided by paying for the virtual desktops and apps you use on a monthly, as-you-go basis.

The McKinsey study reflects the sentiment that things will worsen before they get better. According to the survey, 43 percent of those surveyed believe the global economy will improve over the next six months, almost equaling the 40 percent who think conditions will worsen.

Are virtual apps or virtual desktops the definitive cure for the dicey times ahead? No. Virtual desktops, as a technology, are simply another tool to use to manage cost, create productivity, minimize risk, and seek a competitive advantage. VDI offers some unique capabilities that can give a business superpowers if adequately leveraged.

Lessons Learned from a Pandemic: Three mistakes to avoid with VDI

Lesson 1: Pay attention to end-user experience. There is a significant gap between building a work-from-anywhere solution that works and a work-from-home solution that provides a great end-user experience. Employees have options. Give them a poor set of tools that limits their ability to do their job or makes it unnecessarily painful, and they could go find someone else to work for who can give them the tools they need to be as productive as they can be.

Lesson 2: The work-from-home solution that got you through 2020 and 2021 might not be the work-from-home solution that should be in place permanently in 2022 and beyond. There is a difference between having tools that work and having tools that work well. Get proactive, talk to end-users and make sure their work experience meets an acceptable standard.

Lesson 3: Lone contributors tend to be more successful in a work-from-home environment than team members who work in groups or who require heavy collaboration to get their work done. Look at the communication tools available and gauge how adequately they replicate the in-office collaborative work experience.

Whitehat Virtual is technology rocket fuel for companies that depend on technology to grow. Clients benefit instantly from the collective experience gained managing thousands of end-users worldwide, blazing new trails while following the well-traveled paths of those who have been there before.

With every ticket we close, every issue root-cause we identify, every process we set in place or project we complete, Whitehat gets better and better at fulfilling our purpose.

This is Whitehat Virtual Technologies. This is who we are. This is what you could be a part of. Discover how Whitehat Virtual can help you realize the benefits of a managed IT services model here, then reach out to see how we can help.
